IHD-Experimentation Integration

Version Control

| Version | Date | Who | What |
|---|---|---|---|
| 0.1 | 29/01/26 | Saji Varghese & Patricia Nieto | Draft |

Document Overview

| Decision required | |
|---|---|
| Decision Outcome | |
| Owner | Saji Varghese & Patricia Nieto |
| Current Status | Draft |
| Decider(s) | |

1. Context

We are looking to integrate our IHD flow with Experimentation (LES/Loop SDK), so that experiments can be run using our messaging platform.

Depending on the experiment a customer is part of, this would enable us to do things like:

- Sending different message templates

- Applying different message preferences (toast vs. full screen)

2. Decision Driver

As per above.

3. Considered Options

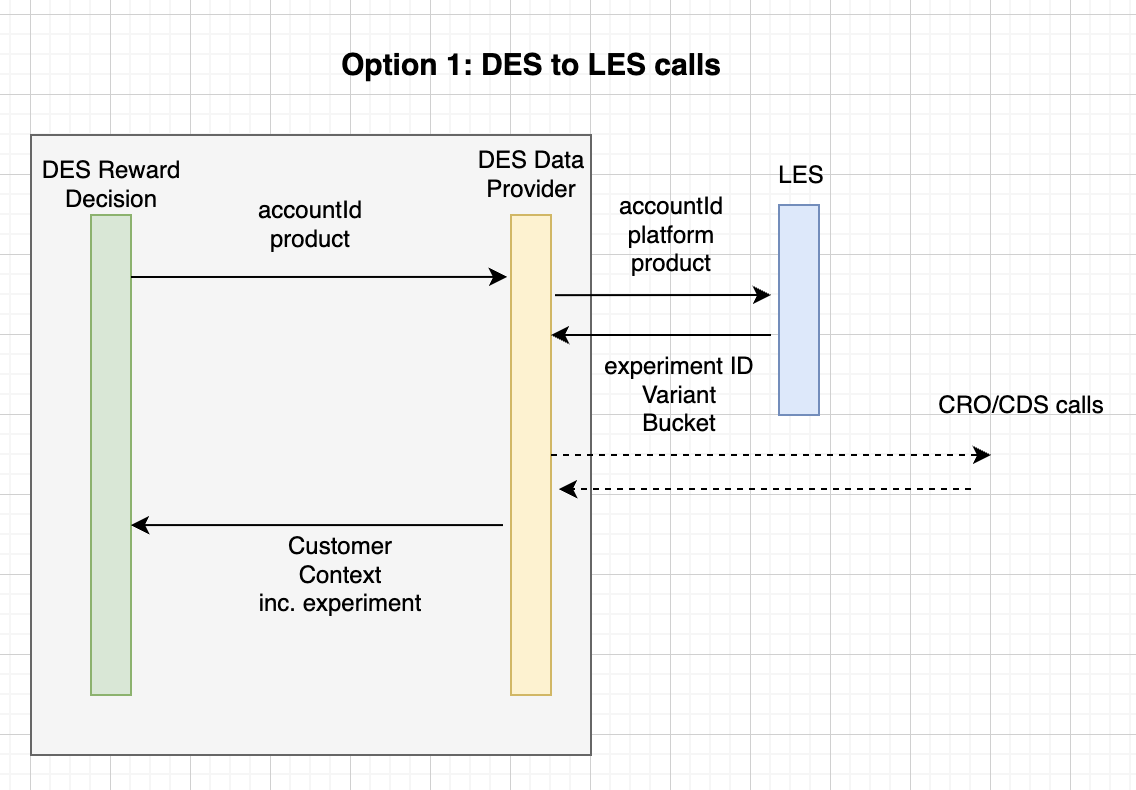

Option 1: DES to LES calls

We add an LES client to the DES Data Provider. We call it with accountId, platform and product to get the experiment id and variant.

Calling LES from DES makes sense because DES already has clients for CRO/CDS.

We then use the decision table in DES to send the appropriate message to the customer.

Benefits

- Simple implementation

Drawbacks

- Higher maintenance of the LES client

- Experiment exposures will not be logged unless we also implement an LPS client to handle the logging (further maintenance).

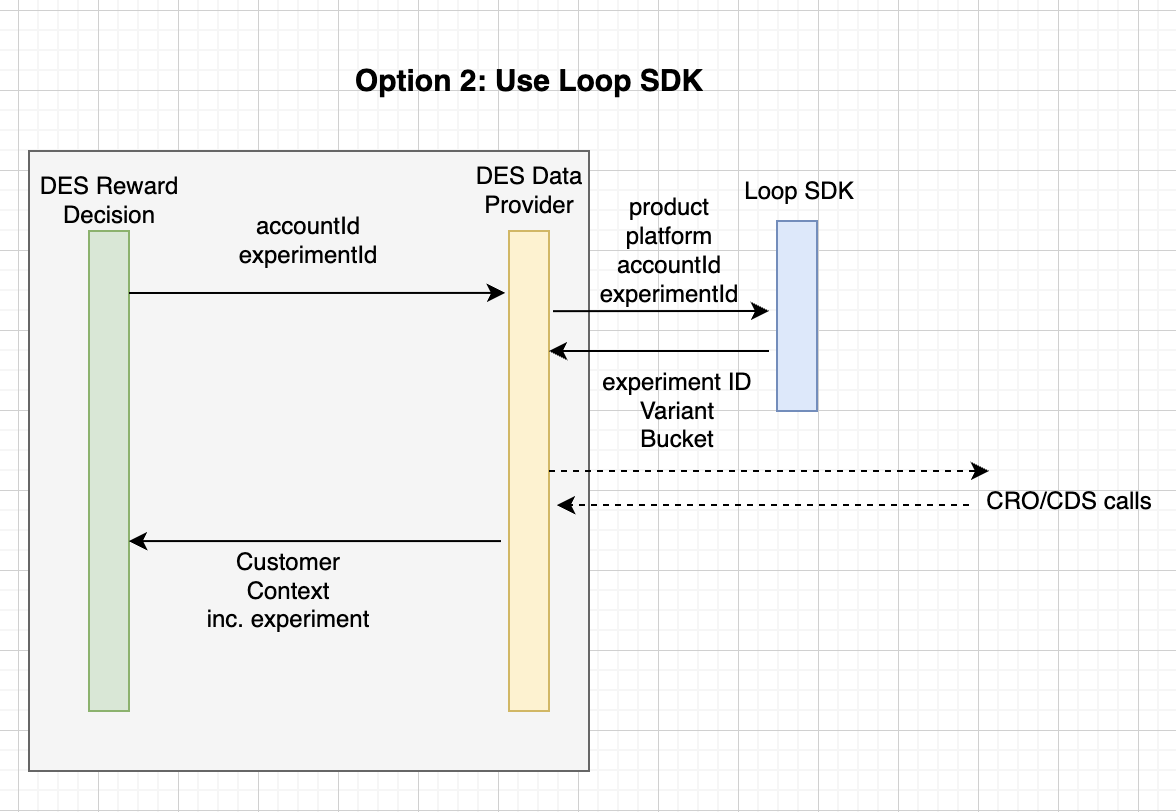

Option 2: Use Loop SDK

When an experiment is added manually to the decision table, we will have its experimentId. We can use it together with the accountId to call the Loop SDK and get the variant. Note that we do not send anything if a customer is not in the right Programme and Initiative (NA). The same applies to non-UKI customers. In those cases we would still call the Loop SDK, but we would then do nothing with the result.

- We run a prevalidation step to identify which experimentId applies to the rewardType (manually configured)

- We call the Loop SDK to get the customer's experiment variant

- We call the Data Provider for the full customer context and experiment

- We return the decision

Benefits

- Uses the Loop SDK -> easier maintenance, and exposures are registered through LPS (without needing to use BCG).

Drawbacks

- If we decide not to send a message to a particular customer (due to NA Programme/Initiative or a non-UKI customer), we would still make an unnecessary call to the Loop SDK.

- This could result in an experiment being logged as shown to the customer (LPS publishes experiment exposures to Kafka), even though the message is never sent.

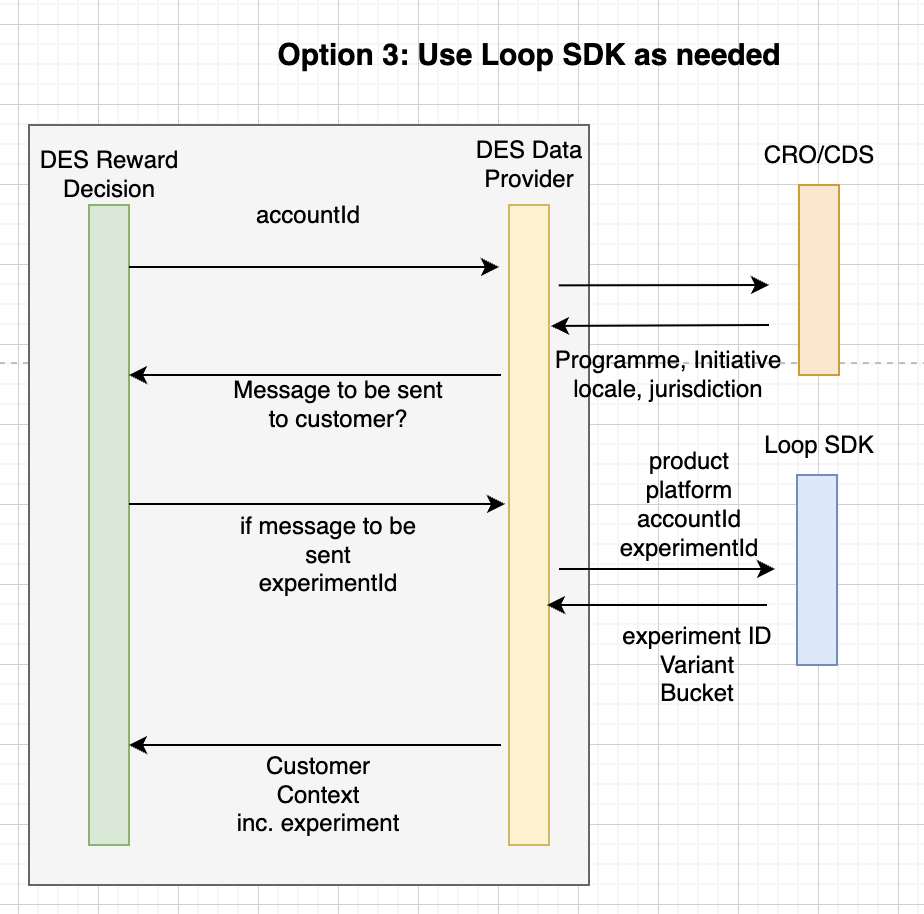

Option 3: Use Loop SDK only as needed

- We call the Data Provider to get the CRO/CDS input, which tells us whether a message will be sent to the customer

- If a message is to be sent and an experimentId is configured for that rewardType, we call the Loop SDK to get the variant. Otherwise, no call is made.

- We then call the Data Provider again to get the full customer context.

- We return the decision
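The Option 3 steps can be sketched in Java, the language of the Quarkus/Kogito stack. This is a minimal sketch under stated assumptions. `DataProvider`, `VariantLookup`, `Eligibility`, `Decision` and `Option3Flow` are hypothetical names, not the real DES Data Provider or Loop SDK APIs, and the decision table evaluation is left out. The sketch illustrates the ordering of the calls: the Loop SDK stand-in is reached only when a message will be sent and an experimentId is configured for the rewardType.

```java
import java.util.Map;
import java.util.Objects;
import java.util.Optional;

// Hypothetical types: none of these names come from DES, LES or the Loop SDK.
record Eligibility(boolean messageToBeSent) {}

record Decision(String rewardType, Optional<String> variant, Map<String, String> context) {}

interface DataProvider {
    // First call: CRO/CDS input, enough to know whether a message will be sent.
    Eligibility eligibility(String accountId);

    // Second call: full customer context used by the decision table.
    Map<String, String> customerContext(String accountId);
}

@FunctionalInterface
interface VariantLookup {
    // Stand-in for the Loop SDK call that returns the customer's variant.
    String variantFor(String experimentId, String accountId);
}

final class Option3Flow {
    private final DataProvider dataProvider;
    private final VariantLookup loop;
    private final Map<String, String> experimentIdByRewardType; // manually configured

    Option3Flow(DataProvider dataProvider, VariantLookup loop,
                Map<String, String> experimentIdByRewardType) {
        this.dataProvider = Objects.requireNonNull(dataProvider);
        this.loop = Objects.requireNonNull(loop);
        this.experimentIdByRewardType = Map.copyOf(experimentIdByRewardType);
    }

    Optional<Decision> decide(String accountId, String rewardType) {
        // 1. CRO/CDS input decides whether any message goes out at all.
        if (!dataProvider.eligibility(accountId).messageToBeSent()) {
            return Optional.empty(); // no variant lookup, so no exposure can be logged
        }
        // 2. Ask for a variant only when an experimentId exists for this rewardType.
        Optional<String> variant = Optional.ofNullable(experimentIdByRewardType.get(rewardType))
                .map(experimentId -> loop.variantFor(experimentId, accountId));
        // 3. Second Data Provider call for the full customer context.
        Map<String, String> context = dataProvider.customerContext(accountId);
        // 4. The decision table would be evaluated here; this sketch just returns its inputs.
        return Optional.of(new Decision(rewardType, variant, context));
    }
}
```

The eligibility check runs first. A customer who will not receive a message therefore never triggers a variant lookup, and this is how the option avoids unwanted exposure logging. When no experimentId is configured, the flow runs as it does today.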

Benefits

- No unnecessary calls to Loop SDK

- No unwanted experiment exposure logging

Drawbacks

- Two calls to the DES Data Provider

4. Team discussion

During a team discussion of the above options, Haohong Lin proposed a database with a table mapping rewardType to experiment. This would also need another table holding the variant.

With this approach, the service would not need a new deployment every time someone requests a change to an experiment. Haohong was concerned about frequent deployments of the service if this information is hardcoded in the decision tables.

Saji explained that hardcoding would be a temporary measure to bring quick value to the company. In the future we would want to move to a better approach.

Future options: we could keep an XML file in the config and mount it into DES. Kogito could then expose a UI where people can change the experiment directly instead of editing the codebase manually.

Haohong was concerned that the unit tests would no longer work, as the XML would be dynamic. Saji suggested building a process around it: a tool that checks what has been written in the XML to make sure it is correct (for example, a GitHub Actions pipeline instead of the Kogito unit tests we have at the moment).

For the longer term, Saji proposed using Kogito DMN and deploying different services according to each use case. For example, a gaming service might run one experiment per accountId instead of keying on rewardType. Each such service provides a REST endpoint to call into.

In this case, we would not have DES. Another service would run Kogito with the decision table instead. The Data Provider would fetch the CRO/CDS data and send it to the Kogito server, which would return the decision.

The team agreed that Option 3 is the most suitable in the short term. It is quick to implement, functional and brings value to the company. The team would consider refactoring in the future as the number of use cases increases.

5. Decision

Adopt: Option 3: Use Loop SDK only as needed

Rationale:

- No unnecessary calls to the Loop SDK if no message is to be sent to the customer -> no unnecessary exposure logging

- The flow remains unchanged if there is no experimentId in the table

- Less maintenance of the client required than if we were to call LES directly

6. Consequences

Positive

- Incorporating experiments into the messaging flow and the possibilities it opens for experimentation

Negative

- Increased ongoing dev work after this first iteration, since every new or changed experiment will require a change to the decision table and a new deployment -> close communication between teams required

- We will need to refactor our solution in the future, likely requiring a substantial amount of work

7. Update

After starting this work and attempting to integrate the Loop SDK client into the DES Data Provider, we realised that the implementation was far more complex than initially expected. This was because we needed to implement our own caches, HttpClient, etc. Wiring these into the provided client was more complex and harder to read than adding them directly in Quarkus. The team therefore met again to discuss further options.

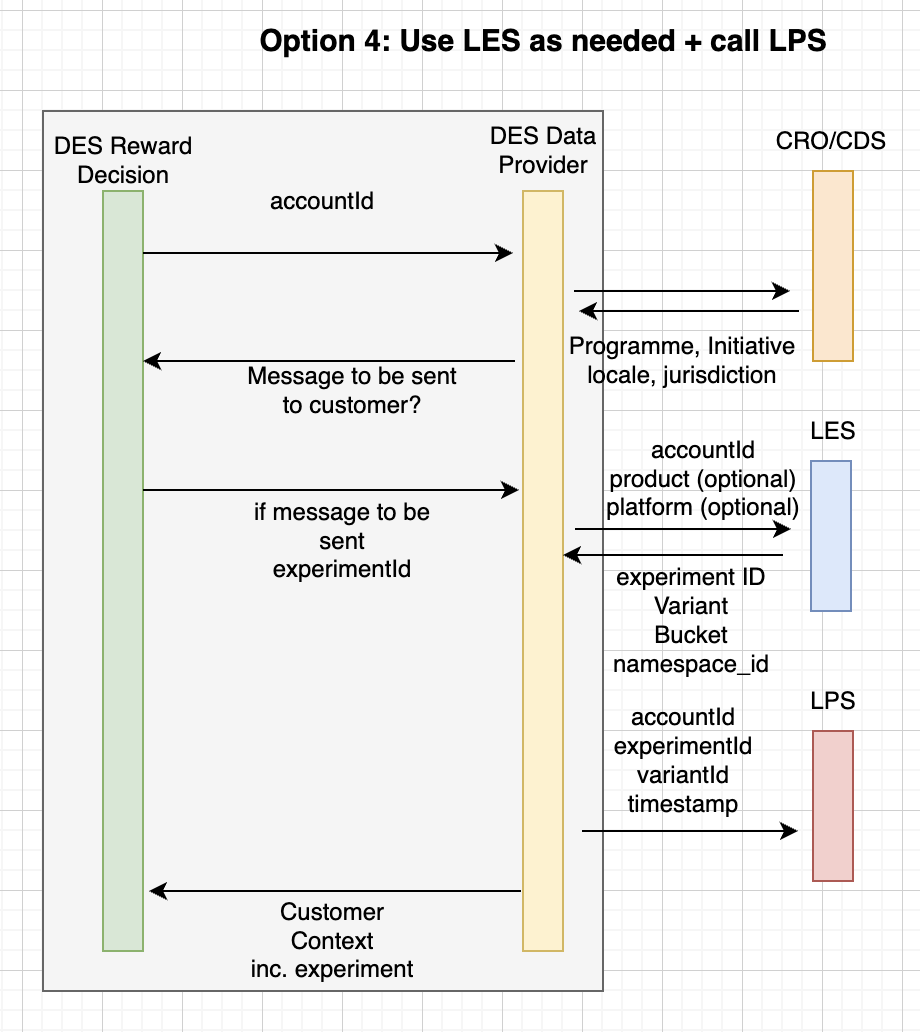

Option 4

This option reuses the flow of the previously discussed Option 3, but calls LES directly instead of going through the Loop SDK. We would then call LPS OR send a message to the Kafka stream to log the exposure.
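Below is a minimal sketch of the Option 4 variant lookup. It assumes that LES returns a list of (experimentId, variant) assignments for an account. It also assumes that exposure logging sits behind an interface, so the LPS call could later be replaced by a Kafka producer. `LesClient`, `ExposureLogger`, `Assignment`, `Exposure`, `Option4VariantService` and `RecordingExposureLogger` are hypothetical names. This document does not specify the actual LES V3 and LPS contracts.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

// Hypothetical shapes: the real LES V3 response and LPS request formats are not described here.
record Assignment(String experimentId, String variant) {}

record Exposure(String accountId, String experimentId, String variant) {}

@FunctionalInterface
interface LesClient {
    // Direct call to LES: accountId is mandatory, product and platform may be null.
    List<Assignment> assignments(String accountId, String product, String platform);
}

@FunctionalInterface
interface ExposureLogger {
    // Option 4 today: an LPS call. Possibly later: a Kafka producer behind the same interface.
    void logExposure(Exposure exposure);
}

final class Option4VariantService {
    private final LesClient les;
    private final ExposureLogger exposureLogger;

    Option4VariantService(LesClient les, ExposureLogger exposureLogger) {
        this.les = les;
        this.exposureLogger = exposureLogger;
    }

    // Called only after the Option 3 checks: a message will be sent and an
    // experimentId is configured for the rewardType.
    Optional<String> variantFor(String accountId, String product, String platform, String experimentId) {
        Optional<Assignment> assignment = les.assignments(accountId, product, platform).stream()
                .filter(a -> a.experimentId().equals(experimentId))
                .findFirst();
        // Without the Loop SDK nothing records the exposure for us, so log it explicitly.
        assignment.ifPresent(a -> exposureLogger.logExposure(
                new Exposure(accountId, a.experimentId(), a.variant())));
        return assignment.map(Assignment::variant);
    }
}

// Test double that records exposures in memory instead of calling LPS.
final class RecordingExposureLogger implements ExposureLogger {
    final List<Exposure> logged = new ArrayList<>();

    @Override
    public void logExposure(Exposure exposure) {
        logged.add(exposure);
    }
}
```

The single logging interface is an assumption of this sketch. With it, a later move from LPS to a Kafka producer would only change the logger implementation.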

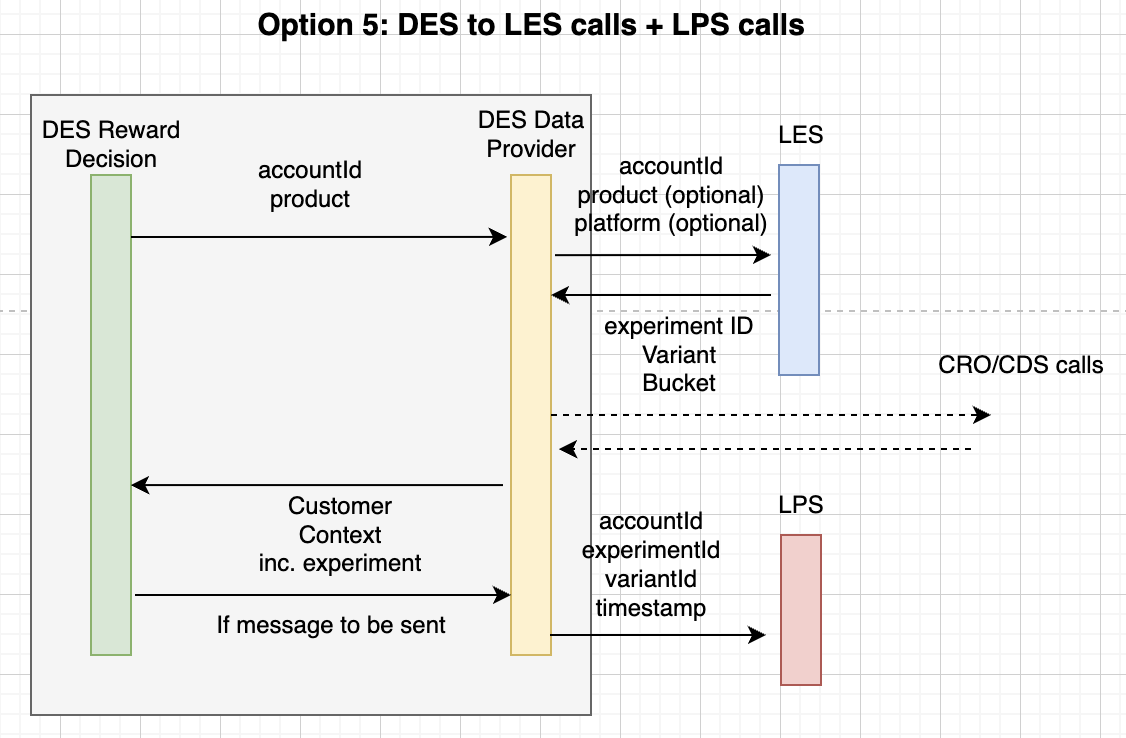

Option 5

This option reuses the flow of the previously discussed Option 1, but adds a step that calls LPS OR sends a message to the Kafka stream to log the exposure.

8. Decision

The team decided to use Option 4 and call LPS directly for the time being. Looking ahead, the team discussed implementing a Kafka producer in the future so that exposures can be logged without depending on LPS, which would scale better.

We will therefore call the V3 LES endpoint with accountId (mandatory) and, optionally, product and platform. Jurisdiction might also be added as an optional parameter at some point. We will cache the LES response, keyed on the parameters used in the request.
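The caching described above can be sketched as a cache keyed on a value object built from exactly the request parameters. The names in this sketch are hypothetical (`LesRequest`, `Assignment`, `CachingLesClient`), and the remote LES call is injected as a `Function` rather than implemented. A Java record supplies null-safe `equals`/`hashCode` over accountId, product and platform. Adding jurisdiction later would therefore only mean adding a record component.

```java
import java.util.List;
import java.util.Map;
import java.util.Objects;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Function;

// Hypothetical response element; the real LES V3 payload is not described in this ADR.
record Assignment(String experimentId, String variant) {}

// Cache key made of exactly the request parameters. Records generate equals/hashCode
// from all components (null-safe), so identical requests map to the same entry.
record LesRequest(String accountId, String product, String platform) {
    LesRequest {
        Objects.requireNonNull(accountId, "accountId is mandatory");
        // product and platform are optional and may be null
    }
}

final class CachingLesClient {
    private final Function<LesRequest, List<Assignment>> remoteCall; // the actual HTTP call to LES
    private final Map<LesRequest, List<Assignment>> cache = new ConcurrentHashMap<>();

    CachingLesClient(Function<LesRequest, List<Assignment>> remoteCall) {
        this.remoteCall = Objects.requireNonNull(remoteCall);
    }

    List<Assignment> assignments(LesRequest request) {
        // computeIfAbsent calls LES at most once per distinct key (for non-null results).
        // Entries never expire in this sketch.
        return cache.computeIfAbsent(request, remoteCall);
    }
}
```

This sketch is only illustrative: `ConcurrentHashMap` never evicts entries, and `computeIfAbsent` blocks other updates to the same bin while the remote call runs. A production version would more likely use a cache with expiry. One example is the Quarkus cache extension's `@CacheResult`, which by default builds a composite key from all method parameters.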